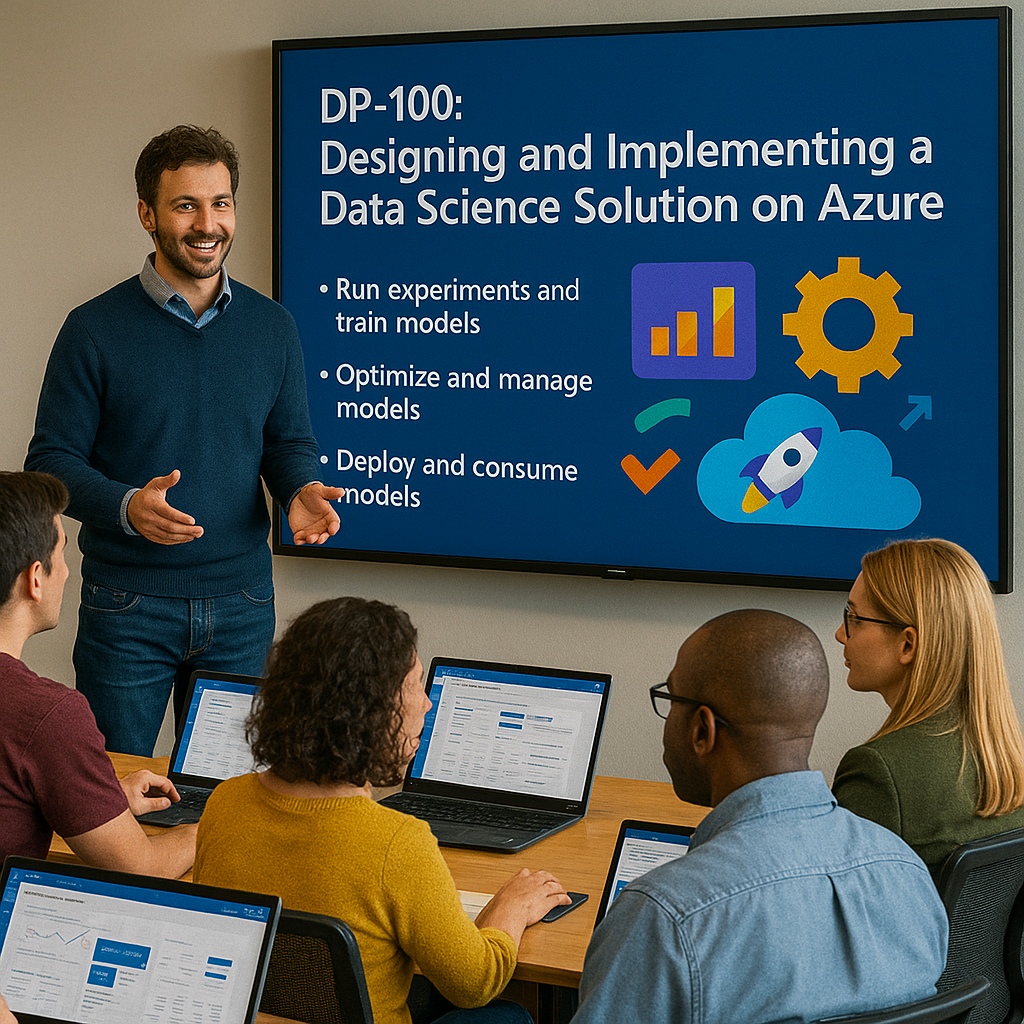

DP-100: Designing and Implementing a Data Science Solution on Azure

Certification: Microsoft Certified: Azure Data Scientist Associate

Dynamics Edge courses and labs are enhanced Instructor-Led Training (ILT) materials that are designed specifically for live, guided instruction and that follow a structured curriculum.

Our materials intentionally differ from Microsoft Learn paths in both structure and flow, to better prepare you for real-world work and to support live Q&A, real-time engagement, and deeper learning. Microsoft Learn paths, by contrast, are self-paced study resources.

You will learn:

Create and Manage Azure Machine Learning Workspaces

Prepare and Ingest Data for Machine Learning

Use Automated Machine Learning (AutoML)

Train and Track Models Using Scripts and MLflow

Perform Hyperparameter Tuning and Model Optimization

Deploy and Monitor Machine Learning Models

Apply Responsible AI Principles

Course outline

Module 1: Explore Azure Machine Learning Workspace Resources and Assets

Create an Azure Machine Learning workspace

Identify workspace resources and their purposes

Explore Azure Machine Learning assets (datasets, models, environments)

Train and manage models within the workspace

Understand asset tracking and versioning

Module 2: Explore Developer Tools for Workspace Interaction

Use Azure Machine Learning Studio for no-code interaction

Access and manage resources using the Python SDK

Automate tasks using the Azure CLI

Understand tool interoperability within the workspace

Choose the right tool based on use case

Module 3: Make Data Available in Azure Machine Learning

Understand the role of URIs in data access

Create and configure datastores for data storage

Create reusable data assets for experiments

Manage dataset versioning and access control

Organize data for scalable ML workflows
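
As a rough illustration of the role URIs play in data access, the sketch below splits a datastore-style URI into a datastore name and a relative path. It assumes the short form `azureml://datastores/<name>/paths/<path>`; the function name `parse_datastore_uri` and the example datastore and file names are hypothetical, and the code uses only the Python standard library rather than the Azure Machine Learning SDK.

```python
from urllib.parse import urlparse


def parse_datastore_uri(uri: str) -> dict:
    """Split an azureml:// datastore URI into datastore name and path.

    Assumes the short form azureml://datastores/<name>/paths/<path>.
    """
    parsed = urlparse(uri)
    if parsed.scheme != "azureml" or parsed.netloc != "datastores":
        raise ValueError(f"Not a datastore URI: {uri}")
    # parsed.path looks like "/<name>/paths/<relative path>"
    name, sep, relative_path = parsed.path.lstrip("/").partition("/paths/")
    if not name or not sep:
        raise ValueError(f"Missing datastore name or /paths/ segment: {uri}")
    return {"datastore": name, "path": relative_path}


# Hypothetical datastore and file names, for illustration only.
example = parse_datastore_uri(
    "azureml://datastores/workspaceblobstore/paths/data/diabetes.csv"
)
print(example)
```

Separating the datastore name from the path is what lets the same data asset definition be reused when the underlying storage location changes.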

Module 4: Work with Compute Targets in Azure Machine Learning

Choose between compute instance, cluster, or attached compute

Create and use a development compute instance

Scale workloads using compute clusters

Optimize resource allocation for training jobs

Monitor compute usage and performance

Module 5: Work with Environments in Azure Machine Learning

Understand Azure ML environments and dependencies

Use curated environments for rapid experimentation

Create custom environments with Conda or Docker

Register and reuse environments across jobs

Manage environment versions for reproducibility

Module 6: Find the Best Classification Model with Automated Machine Learning

Preprocess data and configure featurization settings

Launch an AutoML classification experiment

Track progress and results through the UI and SDK

Evaluate models using built-in metrics

Select the best-performing model for deployment

Module 7: Track Model Training in Jupyter Notebooks with MLflow

Configure MLflow for local and remote tracking

Train models in notebooks using MLflow APIs

Log parameters, metrics, and artifacts

View experiment results and history

Use MLflow UI for model comparison

Module 8: Run a Training Script as a Command Job in Azure Machine Learning

Convert a Jupyter notebook to a Python script

Submit a script as a command job

Pass parameters and inputs to the training job

Monitor job execution and outputs

Use scripts for repeatable and scalable ML runs
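
Passing parameters to a training script usually comes down to reading command-line arguments. The sketch below shows one common pattern using `argparse`; the argument names `--training_data` and `--reg_rate`, their defaults, and the sample values are hypothetical and not taken from the course labs.

```python
import argparse


def parse_args(argv=None):
    """Read the inputs a job would pass on the command line."""
    parser = argparse.ArgumentParser(description="Hypothetical training script")
    parser.add_argument("--training_data", type=str, required=True,
                        help="Path to the training data")
    parser.add_argument("--reg_rate", type=float, default=0.01,
                        help="Regularization rate")
    return parser.parse_args(argv)


def main(argv=None):
    args = parse_args(argv)
    # A real script would load the data and train a model here.
    print(f"Training on {args.training_data} with reg_rate={args.reg_rate}")
    return args


# Simulate the arguments a command job might supply.
args = main(["--training_data", "data/diabetes.csv", "--reg_rate", "0.1"])
```

In a standalone script, calling `main()` with no arguments would read the values from `sys.argv`, which is how a command such as `python train.py --training_data ... --reg_rate ...` reaches the code.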

Module 9: Track Model Training with MLflow in Jobs

Log metrics and artifacts using MLflow

View job metrics in Azure Machine Learning UI

Evaluate model performance across runs

Compare jobs and select optimal models

Integrate MLflow with sweep jobs and pipelines

Module 10: Perform Hyperparameter Tuning with Azure Machine Learning

Define hyperparameter search space

Choose a sampling strategy (random, grid, Bayesian)

Set early termination policies for efficiency

Launch sweep jobs to optimize model parameters

Analyze tuning results and select best model
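
To make the difference between sampling strategies concrete, the following sketch contrasts grid and random sampling over a small discrete search space. It is plain Python for illustration, not the Azure Machine Learning sweep API; the hyperparameter names, values, and function names are hypothetical.

```python
import itertools
import random

# Hypothetical search space: discrete choices per hyperparameter.
search_space = {
    "learning_rate": [0.001, 0.01, 0.1],
    "batch_size": [16, 32],
}


def grid_sample(space):
    """Return every combination of values (grid sampling)."""
    names = list(space)
    return [dict(zip(names, combo)) for combo in itertools.product(*space.values())]


def random_sample(space, n_trials, seed=0):
    """Draw n_trials configurations, choosing each value independently at random."""
    rng = random.Random(seed)
    return [
        {name: rng.choice(values) for name, values in space.items()}
        for _ in range(n_trials)
    ]


grid_trials = grid_sample(search_space)
random_trials = random_sample(search_space, n_trials=4)
print(len(grid_trials), "grid trials;", len(random_trials), "random trials")
```

Grid sampling tries all 3 × 2 = 6 combinations, so its cost grows quickly with each added hyperparameter, while random sampling lets you cap the number of trials up front. Bayesian sampling, which picks new trials based on earlier results, is not shown here.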

Module 11: Run Pipelines in Azure Machine Learning

Create reusable pipeline components

Chain components together into a pipeline

Submit pipeline jobs for orchestration

Manage pipeline versions and dependencies

Monitor pipeline runs and outputs

Module 12: Register an MLflow Model in Azure Machine Learning

Log trained models with MLflow

Understand the MLflow model format and structure

Register a model in the Azure Machine Learning registry

Track model versions and metadata

Prepare models for deployment and monitoring

Module 13: Create and Explore the Responsible AI Dashboard

Understand the importance of Responsible AI

Create the Responsible AI dashboard for a trained model

Evaluate model fairness, explainability, and error analysis

Interpret dashboard insights for decision-making

Use the dashboard to improve model transparency

Module 14: Deploy a Model to a Managed Online Endpoint

Understand managed online endpoint architecture

Deploy an MLflow model to an online endpoint

Deploy custom models to endpoints

Test and validate endpoint functionality

Monitor endpoint status and usage

Module 15: Deploy a Model to a Batch Endpoint

Understand batch inference and use cases

Create and configure batch endpoints

Deploy MLflow or custom models to batch endpoints

Invoke batch jobs and analyze output

Troubleshoot batch deployment issues

Module 16: Introduction to Azure AI Foundry

Define what Azure AI Foundry is

Explore how Azure AI Foundry integrates with ML workflows

Understand AI Foundry’s capabilities in managing models

Identify when and why to use AI Foundry

Compare AI Foundry to other model management tools

Module 17: Explore and Deploy Models from the Model Catalog

Navigate the Azure AI Foundry model catalog

Explore available foundation and language models

Deploy catalog models to endpoints

Enhance model performance using tuning options

Monitor usage and outcomes of deployed models

Module 18: Get Started with Prompt Flow

Understand the development lifecycle for LLM apps

Explore core components and flow types

Connect to data sources using prompt flow connections

Configure runtimes and variants

Monitor and iterate on flow performance

Module 19: Build a RAG-Based Agent with Your Own Data

Understand Retrieval-Augmented Generation (RAG) principles

Prepare and index your data for searchability

Ground a language model using your own data

Build an agent using prompt flow in Azure AI Foundry

Evaluate grounded model outputs for accuracy
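
The retrieve-then-ground idea behind RAG can be sketched without any cloud services. The toy example below ranks a few documents by word-overlap cosine similarity and places the best match in the prompt. It is a simplified stand-in, assuming bag-of-words matching instead of a real search index or embeddings; the documents, question, and function names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base standing in for an indexed data source.
documents = [
    "Compute clusters scale out training jobs across multiple nodes.",
    "Batch endpoints run inference over large volumes of data asynchronously.",
    "Managed online endpoints serve real-time predictions over HTTPS.",
]


def tokenize(text):
    """Lowercase the text and return word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, docs, top_k=1):
    """Return the top_k documents most similar to the question."""
    query = tokenize(question)
    ranked = sorted(docs, key=lambda d: cosine(query, tokenize(d)), reverse=True)
    return ranked[:top_k]


def build_grounded_prompt(question, docs):
    """Place retrieved context ahead of the question in the prompt."""
    context = "\n".join(retrieve(question, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_grounded_prompt("How do batch endpoints run inference?", documents))
```

The resulting prompt would then be sent to a language model; restricting the model to the retrieved context is what grounds its answer in your own data.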

Module 20: Fine-Tune a Language Model

Identify when fine-tuning is appropriate

Prepare training data for fine-tuning chat models

Use Azure AI Foundry (formerly Azure AI Studio) to configure fine-tuning jobs

Deploy fine-tuned models to endpoints

Monitor fine-tuning metrics and results
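
Preparing training data for a chat model typically means writing one conversation per line in a JSONL file. The sketch below assumes the common chat-style layout, in which each line is a JSON object with a `messages` list of role and content pairs; confirm the exact schema for the model you fine-tune. The question and answer pairs, system prompt, and helper names are hypothetical.

```python
import json

# Hypothetical system prompt and question/answer pairs.
SYSTEM_PROMPT = "You are a helpful Azure Machine Learning assistant."

qa_pairs = [
    ("What is a compute cluster?", "A scalable set of VMs used to run training jobs."),
    ("What is a datastore?", "A reference to an existing storage service."),
]


def to_chat_example(question, answer):
    """Wrap one question/answer pair as a chat-style training example."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(pairs):
    """Serialize the examples as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(to_chat_example(q, a)) for q, a in pairs)


def validate_jsonl(text):
    """Return the number of valid examples; raise ValueError on a bad line."""
    count = 0
    for line_no, line in enumerate(text.splitlines(), start=1):
        record = json.loads(line)
        messages = record.get("messages", [])
        if not messages or messages[-1].get("role") != "assistant":
            raise ValueError(f"Line {line_no} must end with an assistant message")
        count += 1
    return count


jsonl_text = to_jsonl(qa_pairs)
print(validate_jsonl(jsonl_text), "examples ready")
```

Validating the file before submitting a job catches malformed lines early, which is cheaper than discovering them after a fine-tuning run fails.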

Module 21: Evaluate the Performance of Generative AI Apps

Set benchmarks for model evaluation

Perform manual evaluations of model output

Use metrics to assess model quality and responsiveness

Compare app iterations to improve performance

Integrate evaluation into the development cycle
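
One simple way to compare app iterations is to score each variant's answers against reference answers and average the results. The sketch below uses token-overlap F1, a generic text-similarity metric chosen here for illustration and not necessarily the metric used in the course; the function names and sample answers are hypothetical.

```python
import re
from collections import Counter


def tokens(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def token_f1(prediction, reference):
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = tokens(prediction), tokens(reference)
    if not pred or not ref:
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def average_f1(predictions, references):
    """Mean token F1 across a set of prediction/reference pairs."""
    scores = [token_f1(p, r) for p, r in zip(predictions, references)]
    return sum(scores) / len(scores)


# Hypothetical reference answers and outputs from two app variants.
references = ["Batch endpoints run asynchronous inference."]
variant_a = ["Batch endpoints run asynchronous inference."]
variant_b = ["Online endpoints serve real-time requests."]

print("variant A:", average_f1(variant_a, references))
print("variant B:", average_f1(variant_b, references))
```

Automated scores like this are best read alongside manual review, since word overlap does not capture whether an answer is factually correct or well grounded.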

Module 22: Responsible Generative AI

Plan a responsible generative AI solution lifecycle

Identify and document potential harms (bias, toxicity, etc.)

Define harm measurement strategies

Apply mitigation techniques in model training and deployment

Implement governance and operations for responsible AI